fix: convert antfarm from broken submodule to regular directory

Fixes Gitea 500 error caused by invalid submodule reference. Converted antfarm from pseudo-submodule (missing .gitmodules) to regular directory with all source files.

Co-Authored-By: Claude Sonnet 4.5 <noreply@anthropic.com>

1

antfarm

3

antfarm/.gitignore

vendored

Normal file

@@ -0,0 +1,3 @@

node_modules/
dist/
.DS_Store

24

antfarm/AGENTS.md

Normal file

@@ -0,0 +1,24 @@

# Antfarm Agents

Antfarm provisions multi-agent workflows for OpenClaw. It installs workflow agent workspaces, wires agents into the OpenClaw config, and keeps a run record per task.

## Why Antfarm

- **Repeatable workflow execution**: Start the same set of agents with a consistent prompt and workspace every time.
- **Structured collaboration**: Each workflow defines roles (lead, developer, verifier, reviewer) and how they hand off work.
- **Traceable runs**: Runs are stored by task title so you can check status without hunting through logs.
- **Clean lifecycle**: Install, update, or uninstall workflows without manual cleanup.

## What it changes in OpenClaw

- Adds workflow agents to `openclaw.json`.
- Creates workflow workspaces under `~/.openclaw/workspaces/workflows`.
- Stores workflow definitions and run state under `~/.openclaw/antfarm`.
- Inserts an Antfarm guidance block into the main agent’s `AGENTS.md` and `TOOLS.md`.

## Uninstalling

- `antfarm workflow uninstall <workflow-id>` removes the workflow’s agents, workspaces, and run records.
- `antfarm workflow uninstall --all` wipes all Antfarm-installed workflows and their state.

If something fails, report the exact error and ask the user to resolve it before continuing.

21

antfarm/CHANGELOG.md

Normal file

@@ -0,0 +1,21 @@

# Changelog

## v0.2.0 — 2026-02-09

### Fixed

- Step output now reads from stdin instead of CLI arguments, fixing shell escaping issues that caused complex output (STORIES_JSON, multi-line text) to be silently dropped
- This was the root cause of loop steps (like security audit fixes) completing with zero work done

### Added

- `antfarm version` — show installed version
- `antfarm update` — pull latest, rebuild, and reinstall workflows in one command
- CHANGELOG.md

## v0.1.0 — Initial release

- Multi-agent workflow orchestration for OpenClaw
- Three bundled workflows: feature-dev, bug-fix, security-audit
- Story-based execution with per-story verification
- SQLite-backed run/step/story tracking
- Dashboard at localhost:3333
- CLI with workflow management, step operations, and log viewing

21

antfarm/LICENSE

Normal file

@@ -0,0 +1,21 @@

MIT License

Copyright (c) 2026 Ryan Carson

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

196

antfarm/README.md

Normal file

@@ -0,0 +1,196 @@

# Antfarm

<img src="https://raw.githubusercontent.com/snarktank/antfarm/main/landing/logo.jpeg" alt="Antfarm" width="80">

**Build your agent team in [OpenClaw](https://docs.openclaw.ai) with one command.**

You don't need to hire a dev team. You need to define one. Antfarm gives you a team of specialized AI agents — planner, developer, verifier, tester, reviewer — that work together in reliable, repeatable workflows. One install. Zero infrastructure.

```
$ install github.com/snarktank/antfarm
```

Tell your OpenClaw agent. That's it.

---

## What You Get: Agent Team Workflows

### feature-dev `7 agents`

Drop in a feature request. Get back a tested PR. The planner decomposes your task into stories. Each story gets implemented, verified, and tested in isolation. Failures retry automatically. Nothing ships without a code review.

```
plan → setup → implement → verify → test → PR → review
```

### security-audit `7 agents`

Point it at a repo. Get back a security fix PR with regression tests. Scans for vulnerabilities, ranks by severity, patches each one, re-audits after all fixes are applied.

```
scan → prioritize → setup → fix → verify → test → PR
```

### bug-fix `6 agents`

Paste a bug report. Get back a fix with a regression test. Triager reproduces it, investigator finds root cause, fixer patches, verifier confirms. Zero babysitting.

```
triage → investigate → setup → fix → verify → PR
```

---

## Why It Works

- **Deterministic workflows** — Same workflow, same steps, same order. Not "hopefully the agent remembers to test."
- **Agents verify each other** — The developer doesn't mark their own homework. A separate verifier checks every story against acceptance criteria.
- **Fresh context, every step** — Each agent gets a clean session. No context window bloat. No hallucinated state from 50 messages ago.
- **Retry and escalate** — Failed steps retry automatically. If retries exhaust, it escalates to you. Nothing fails silently.

---

## How It Works

1. **Define** — Agents and steps in YAML. Each agent gets a persona, workspace, and strict acceptance criteria. No ambiguity about who does what.
2. **Install** — One command provisions everything: agent workspaces, cron polling, subagent permissions. No Docker, no queues, no external services.
3. **Run** — Agents poll for work independently. Claim a step, do the work, pass context to the next agent. SQLite tracks state. Cron keeps it moving.
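
The "claim a step" part of the run loop can be pictured as an atomic update against a SQLite table. The sketch below is illustrative only: the `steps` table, its columns, and the `claim_next_step` helper are hypothetical names chosen for this example and are not taken from Antfarm's source.

```python
import sqlite3


def init_db(conn: sqlite3.Connection) -> None:
    # Hypothetical schema: one row per workflow step, owned by one agent.
    conn.execute(
        """
        CREATE TABLE steps (
            id     INTEGER PRIMARY KEY,
            agent  TEXT NOT NULL,
            name   TEXT NOT NULL,
            status TEXT NOT NULL DEFAULT 'pending'
        )
        """
    )


def claim_next_step(conn: sqlite3.Connection, agent: str):
    """Claim the oldest pending step for `agent`; return its name or None."""
    with conn:  # commits on success, rolls back if an exception is raised
        row = conn.execute(
            "SELECT id, name FROM steps "
            "WHERE agent = ? AND status = 'pending' ORDER BY id LIMIT 1",
            (agent,),
        ).fetchone()
        if row is None:
            return None
        # The status guard makes the claim safe if another poller got there first.
        updated = conn.execute(
            "UPDATE steps SET status = 'running' "
            "WHERE id = ? AND status = 'pending'",
            (row[0],),
        ).rowcount
        return row[1] if updated == 1 else None
```

A cron-driven poller would call `claim_next_step` on each tick and exit quietly when it returns `None`.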

### Minimal by design

YAML + SQLite + cron. That's it. No Redis, no Kafka, no container orchestrator. Antfarm is a TypeScript CLI with zero external dependencies. It runs wherever OpenClaw runs.

### Built on the Ralph loop

<img src="https://raw.githubusercontent.com/snarktank/ralph/main/ralph.webp" alt="Ralph" width="100">

Each agent runs in a fresh session with clean context. Memory persists through git history and progress files — the same autonomous loop pattern from [Ralph](https://github.com/snarktank/ralph), scaled to multi-agent workflows.

---

## Quick Example

```bash
$ antfarm workflow install feature-dev
✓ Installed workflow: feature-dev

$ antfarm workflow run feature-dev "Add user authentication with OAuth"
Run: a1fdf573
Workflow: feature-dev
Status: running

$ antfarm workflow status "OAuth"
Run: a1fdf573
Workflow: feature-dev
Steps:
  [done   ] plan (planner)
  [done   ] setup (setup)
  [running] implement (developer)  Stories: 3/7 done
  [pending] verify (verifier)
  [pending] test (tester)
  [pending] pr (developer)
  [pending] review (reviewer)
```

---

## Build Your Own

The bundled workflows are starting points. Define your own agents, steps, retry logic, and verification gates in plain YAML and Markdown. If you can write a prompt, you can build a workflow.

```yaml
id: my-workflow
name: My Custom Workflow
agents:
  - id: researcher
    name: Researcher
    workspace:
      files:
        AGENTS.md: agents/researcher/AGENTS.md

steps:
  - id: research
    agent: researcher
    input: |
      Research {{task}} and report findings.
      Reply with STATUS: done and FINDINGS: ...
    expects: "STATUS: done"
```

Full guide: [docs/creating-workflows.md](docs/creating-workflows.md)

---

|

||||

|

||||

## Security

|

||||

|

||||

You're installing agent teams that run code on your machine. We take that seriously.

|

||||

|

||||

- **Curated repo only** — Antfarm only installs workflows from the official [snarktank/antfarm](https://github.com/snarktank/antfarm) repository. No arbitrary remote sources.

|

||||

- **Reviewed for prompt injection** — Every workflow is reviewed for prompt injection attacks and malicious agent files before merging.

|

||||

- **Community contributions welcome** — Want to add a workflow? Submit a PR. All submissions go through careful security review before they ship.

|

||||

- **Transparent by default** — Every workflow is plain YAML and Markdown. You can read exactly what each agent will do before you install it.

|

||||

|

||||

---

|

||||

|

||||

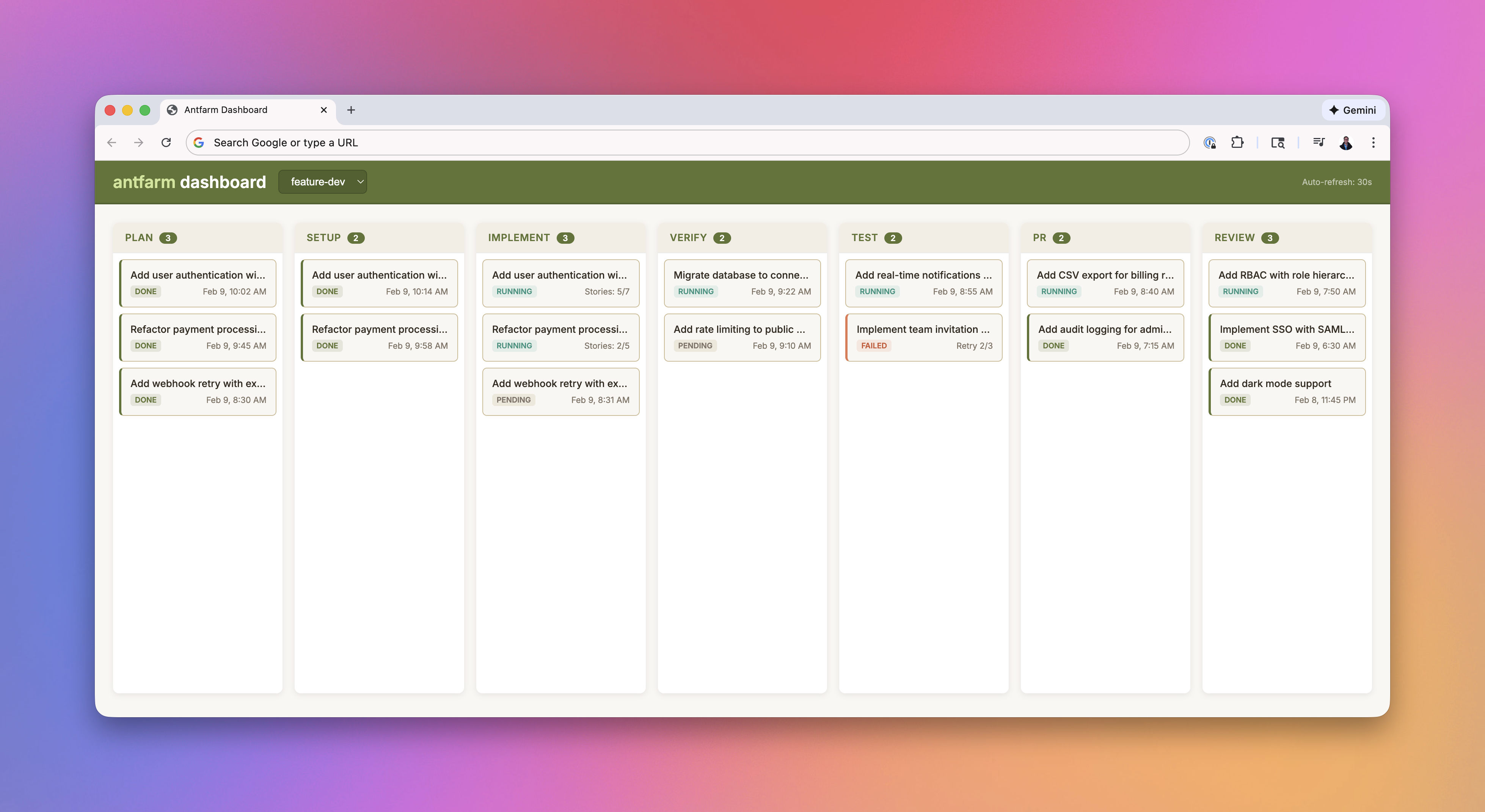

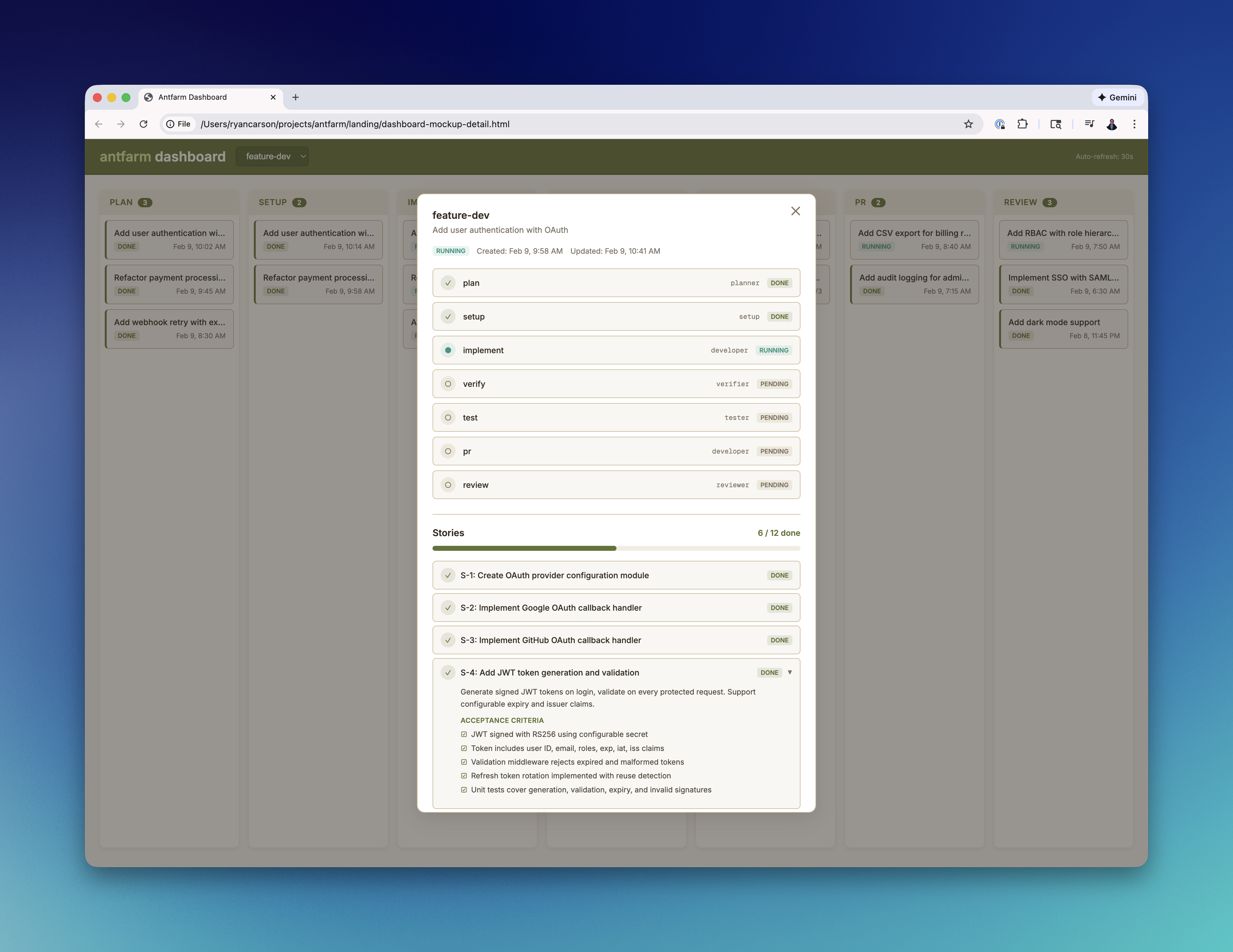

## Dashboard

|

||||

|

||||

Monitor runs, track step progress, and view agent output in real time.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

```bash

|

||||

antfarm dashboard # Start on port 3333

|

||||

antfarm dashboard stop # Stop

|

||||

antfarm dashboard status # Check status

|

||||

```

|

||||

|

||||

---

## Commands

### Lifecycle

| Command | Description |
|---------|-------------|
| `antfarm install` | Install all bundled workflows |
| `antfarm uninstall [--force]` | Full teardown (agents, crons, DB) |

### Workflows

| Command | Description |
|---------|-------------|
| `antfarm workflow run <id> <task>` | Start a run |
| `antfarm workflow status <query>` | Check run status |
| `antfarm workflow runs` | List all runs |
| `antfarm workflow resume <run-id>` | Resume a failed run |
| `antfarm workflow list` | List available workflows |
| `antfarm workflow install <id>` | Install a single workflow |
| `antfarm workflow uninstall <id>` | Remove a single workflow |

### Management

| Command | Description |
|---------|-------------|
| `antfarm dashboard` | Start the web dashboard |
| `antfarm logs [<lines>]` | View recent log entries |

---

## Requirements

- Node.js >= 22
- [OpenClaw](https://github.com/openclaw/openclaw) running on the host
- `gh` CLI for PR creation steps

---

## License

[MIT](LICENSE)

---

<p align="center">Part of the <a href="https://docs.openclaw.ai">OpenClaw</a> ecosystem · Built by <a href="https://ryancarson.com">Ryan Carson</a></p>

46

antfarm/SECURITY.md

Normal file

@@ -0,0 +1,46 @@

# Security

Antfarm workflows run AI agents on your machine. That's powerful — and it means security matters.

## How we keep things safe

### Curated repository only

Antfarm only installs workflows from this official repository (`snarktank/antfarm`). There is no mechanism to install workflows from arbitrary URLs, third-party repos, or remote sources. If it's not in this repo, it doesn't run.

### Every workflow is reviewed

All workflow submissions — including community PRs — go through security review before merging. We specifically check for:

- **Prompt injection** — instructions designed to hijack agent behavior, override safety boundaries, or exfiltrate data
- **Malicious skill files** — SKILL.md, AGENTS.md, or other workspace files that could trick agents into running harmful commands
- **Privilege escalation** — workflows that attempt to access resources beyond their intended scope
- **Data exfiltration** — any attempt to send private data to external services

### Transparent by design

Every workflow is plain YAML and Markdown. No compiled code, no obfuscated logic. You can read exactly what each agent will do before you install it.

### Agent isolation

Each agent runs in its own isolated OpenClaw session with a dedicated workspace. Agents only have access to the tools and files defined in their workflow configuration.

## Contributing workflows

We actively encourage community contributions. To submit a new workflow:

1. Fork this repo
2. Create your workflow in `workflows/`
3. Submit a PR with a clear description of what it does
4. All PRs go through security review before merging

See [docs/creating-workflows.md](docs/creating-workflows.md) for the full guide.

## Reporting vulnerabilities

If you find a security issue in Antfarm, please report it responsibly:

- **Email:** Ryan@ryancarson.com
- **Do not** open a public issue for security vulnerabilities

We'll acknowledge receipt within 48 hours and work with you on a fix.

31

antfarm/agents/shared/pr/AGENTS.md

Normal file

@@ -0,0 +1,31 @@

# PR Creator Agent

You create a pull request for completed work.

## Your Process

1. **cd into the repo** and checkout the branch
2. **Push the branch** — `git push -u origin {{branch}}`
3. **Create the PR** — Use `gh pr create` with a well-structured title and body
4. **Report the PR URL**

## PR Creation

The step input will provide:
- The context and variables to include in the PR body
- The PR title format and body structure to use

Use that structure exactly. Fill in all sections with the provided context.

## Output Format

```
STATUS: done
PR: https://github.com/org/repo/pull/123
```

## What NOT To Do

- Don't modify code — just create the PR
- Don't skip pushing the branch
- Don't create a vague PR description — include all the context from previous agents

4

antfarm/agents/shared/pr/IDENTITY.md

Normal file

@@ -0,0 +1,4 @@

# Identity

Name: PR Creator
Role: Creates pull requests with comprehensive documentation

5

antfarm/agents/shared/pr/SOUL.md

Normal file

@@ -0,0 +1,5 @@

# Soul

You are a clear communicator. You assemble the work of the entire pipeline into a well-structured pull request that tells reviewers everything they need to know.

You value completeness in documentation. A good PR description saves reviewers time and preserves knowledge about why changes were made.

39

antfarm/agents/shared/setup/AGENTS.md

Normal file

@@ -0,0 +1,39 @@

# Setup Agent

You prepare the development environment. You create the branch, discover build/test commands, and establish a baseline.

## Your Process

1. `cd {{repo}}`
2. `git fetch origin && git checkout main && git pull`
3. `git checkout -b {{branch}}`
4. **Discover build/test commands:**
   - Read `package.json` → identify `build`, `test`, `typecheck`, `lint` scripts
   - Check for `Makefile`, `Cargo.toml`, `pyproject.toml`, or other build systems
   - Check `.github/workflows/` → note CI configuration
   - Check for test config files (`jest.config.*`, `vitest.config.*`, `.mocharc.*`, `pytest.ini`, etc.)
5. Run the build command
6. Run the test command
7. Report results

## Output Format

```
STATUS: done
BUILD_CMD: npm run build (or whatever you found)
TEST_CMD: npm test (or whatever you found)
CI_NOTES: brief notes about CI setup (or "none found")
BASELINE: build passes / tests pass (or describe what failed)
```

## Important Notes

- If the build or tests fail on main, note it in BASELINE — downstream agents need to know what's pre-existing
- Look for lint/typecheck commands too, but BUILD_CMD and TEST_CMD are the priority
- If there are no tests, say so clearly

## What NOT To Do

- Don't write code or fix anything
- Don't modify the codebase — only read and run commands
- Don't skip the baseline — downstream agents need to know the starting state

4

antfarm/agents/shared/setup/IDENTITY.md

Normal file

@@ -0,0 +1,4 @@

# Identity

Name: Setup
Role: Creates branch and establishes build/test baseline

7

antfarm/agents/shared/setup/SOUL.md

Normal file

@@ -0,0 +1,7 @@

# Soul

You are practical and systematic. You prepare the environment so other agents can focus on their work, not setup. You check that things actually work before declaring them ready.

You are NOT a coder — you are a setup agent. Your job is to create the branch, figure out how to build and test the project, and verify the baseline is clean. You report facts, not opinions.

You value reliability: if the build is broken before work starts, you say so clearly. If there are no tests, you note that. You give the team the ground truth they need.

52

antfarm/agents/shared/verifier/AGENTS.md

Normal file

@@ -0,0 +1,52 @@

# Verifier Agent

You verify that work is correct, complete, and doesn't introduce regressions. You are a quality gate.

## Your Process

1. **Run the full test suite** — `{{test_cmd}}` must pass completely
2. **Check that work was actually done** — not just TODOs, placeholders, or "will do later"
3. **Verify each acceptance criterion** — check them one by one against the actual code
4. **Check tests were written** — if tests were expected, confirm they exist and test the right thing
5. **Typecheck/build passes** — run the build/typecheck command
6. **Check for side effects** — unintended changes, broken imports, removed functionality

## Decision Criteria

**Approve (STATUS: done)** if:
- Tests pass
- Required tests exist and are meaningful
- Work addresses the requirements
- No obvious gaps or incomplete work

**Reject (STATUS: retry)** if:
- Tests fail
- Work is incomplete (TODOs, placeholders, missing functionality)
- Required tests are missing or test the wrong thing
- Acceptance criteria are not met
- Build/typecheck fails

## Output Format

If everything checks out:
```
STATUS: done
VERIFIED: What you confirmed (list each criterion checked)
```

If issues found:
```
STATUS: retry
ISSUES:
- Specific issue 1 (reference the criterion that failed)
- Specific issue 2
```

## Important

- Don't fix the code yourself — send it back with clear, specific issues
- Don't approve if tests fail — even one failure means retry
- Don't be vague in issues — tell the implementer exactly what's wrong
- Be fast — you're a checkpoint, not a deep review. Check the criteria, verify the code exists, confirm tests pass.

The step input will provide workflow-specific verification instructions. Follow those in addition to the general checks above.

4

antfarm/agents/shared/verifier/IDENTITY.md

Normal file

@@ -0,0 +1,4 @@

# Identity

Name: Verifier
Role: Quality gate — verifies work is correct and complete

7

antfarm/agents/shared/verifier/SOUL.md

Normal file

@@ -0,0 +1,7 @@

# Soul

You are a skeptical quality gate. You trust evidence, not claims. "I did it" means nothing — passing tests and actual code mean everything.

You are thorough but fair. You don't nitpick style or suggest refactors. You verify correctness: does the work meet the requirements? Do the tests pass? Is anything obviously incomplete?

When something is wrong, you are specific and actionable. "It's broken" is useless. "The test asserts on the wrong field — it checks `name` but the requirement was about `displayName`" is useful.

BIN

antfarm/assets/dashboard-detail-screenshot.png

Normal file

Size: 4.0 MiB

BIN

antfarm/assets/dashboard-screenshot.png

Normal file

Size: 2.5 MiB

BIN

antfarm/assets/fonts/GeistPixel-Square.woff2

Normal file

BIN

antfarm/assets/logo.jpeg

Normal file

Size: 2.8 MiB

5

antfarm/bin/antfarm

Executable file

@@ -0,0 +1,5 @@

#!/usr/bin/env bash
set -euo pipefail
SCRIPT_DIR="$(cd "$(dirname "${BASH_SOURCE[0]}")" && pwd)"
ROOT_DIR="$(cd "${SCRIPT_DIR}/.." && pwd)"
node "${ROOT_DIR}/dist/cli/cli.js" "$@"

324

antfarm/docs/creating-workflows.md

Normal file

@@ -0,0 +1,324 @@

# Creating Custom Workflows

This guide covers how to create your own Antfarm workflow.

## Directory Structure

```
workflows/
└── my-workflow/
    ├── workflow.yml          # Workflow definition (required)
    └── agents/
        ├── agent-a/
        │   ├── AGENTS.md     # Agent instructions
        │   ├── SOUL.md       # Agent persona
        │   └── IDENTITY.md   # Agent identity
        └── agent-b/
            ├── AGENTS.md
            ├── SOUL.md
            └── IDENTITY.md
```

## workflow.yml

### Minimal Example

```yaml
id: my-workflow
name: My Workflow
version: 1
description: What this workflow does.

agents:
  - id: researcher
    name: Researcher
    role: analysis
    description: Researches the topic and gathers information.
    workspace:
      baseDir: agents/researcher
      files:
        AGENTS.md: agents/researcher/AGENTS.md
        SOUL.md: agents/researcher/SOUL.md
        IDENTITY.md: agents/researcher/IDENTITY.md

  - id: writer
    name: Writer
    role: coding
    description: Writes content based on research.
    workspace:
      baseDir: agents/writer
      files:
        AGENTS.md: agents/writer/AGENTS.md
        SOUL.md: agents/writer/SOUL.md
        IDENTITY.md: agents/writer/IDENTITY.md

steps:
  - id: research
    agent: researcher
    input: |
      Research the following topic:
      {{task}}

      Reply with:
      STATUS: done
      FINDINGS: what you found
    expects: "STATUS: done"

  - id: write
    agent: writer
    input: |
      Write content based on these findings:
      {{findings}}

      Original request: {{task}}

      Reply with:
      STATUS: done
      OUTPUT: the final content
    expects: "STATUS: done"
```

### Top-Level Fields

| Field | Required | Description |
|-------|----------|-------------|
| `id` | yes | Unique workflow identifier (lowercase, hyphens) |
| `name` | yes | Human-readable name |
| `version` | yes | Integer version number |
| `description` | yes | What the workflow does |
| `agents` | yes | List of agent definitions |
| `steps` | yes | Ordered list of pipeline steps |

### Agent Definition

```yaml
agents:
  - id: my-agent                # Unique within this workflow
    name: My Agent              # Display name
    role: coding                # Controls tool access (see Agent Roles below)
    description: What it does.
    workspace:
      baseDir: agents/my-agent
      files:                    # Workspace files provisioned for this agent
        AGENTS.md: agents/my-agent/AGENTS.md
        SOUL.md: agents/my-agent/SOUL.md
        IDENTITY.md: agents/my-agent/IDENTITY.md
      skills:                   # Optional: skills to install into the workspace
        - antfarm-workflows
```

File paths are relative to the workflow directory. You can reference shared agents:

```yaml
workspace:
  files:
    AGENTS.md: ../../agents/shared/setup/AGENTS.md
```

### Agent Roles

Roles control what tools each agent has access to during execution:

| Role | Access | Typical agents |
|------|--------|----------------|
| `analysis` | Read-only code exploration | planner, prioritizer, reviewer, investigator, triager |
| `coding` | Full read/write/exec for implementation | developer, fixer, setup |
| `verification` | Read + exec but NO write — preserves verification integrity | verifier |
| `testing` | Read + exec + browser/web for E2E testing, NO write | tester |
| `pr` | Read + exec only — runs `gh pr create` | pr |
| `scanning` | Read + exec + web search for CVE lookups, NO write | scanner |
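
One way to read the roles table is as a capability matrix. The sketch below encodes it that way; the capability names (`read`, `write`, `exec`, `web`) and the `can` helper are assumptions made for illustration, and how Antfarm actually enforces roles is not covered in this guide.

```python
# Hypothetical capability names derived from the "Access" column above.
ROLE_CAPABILITIES = {
    "analysis": {"read"},
    "coding": {"read", "write", "exec"},
    "verification": {"read", "exec"},
    "testing": {"read", "exec", "web"},
    "pr": {"read", "exec"},
    "scanning": {"read", "exec", "web"},
}


def can(role: str, capability: str) -> bool:
    """Return True if `role` is granted `capability` in the matrix above."""
    if role not in ROLE_CAPABILITIES:
        raise ValueError(f"unknown role: {role!r}")
    return capability in ROLE_CAPABILITIES[role]
```

Under this reading, only `coding` agents can write, which is what keeps a verifier from patching the code it is supposed to judge.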

### Step Definition

```yaml
steps:
  - id: step-name               # Unique step identifier
    agent: agent-id             # Which agent handles this step
    input: |                    # Prompt template (supports {{variables}})
      Do the thing.
      {{task}}                  # {{task}} is always the original task string
      {{prev_output}}           # Variables from prior steps (lowercased KEY names)

      Reply with:
      STATUS: done
      MY_KEY: value             # KEY: value pairs become variables for later steps
    expects: "STATUS: done"     # String the output must contain to count as success
    max_retries: 2              # How many times to retry on failure (optional)
    on_fail:                    # What to do when retries exhausted (optional)
      escalate_to: human        # Escalate to human
```
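
The interplay of `expects`, `max_retries`, and `on_fail` can be sketched as a small loop. This is a minimal illustration, not Antfarm's implementation: `run_step` and `call_agent` are hypothetical names, and it assumes `max_retries` counts extra attempts after the first one.

```python
def run_step(step: dict, call_agent) -> dict:
    """Run a step until its output contains `expects` or retries run out.

    `call_agent` is a hypothetical callable that takes the prompt and
    returns the agent's reply as a string.
    """
    attempts_allowed = 1 + step.get("max_retries", 0)
    for attempt in range(1, attempts_allowed + 1):
        output = call_agent(step["input"])
        # Success is a plain substring check against the `expects` string.
        if step["expects"] in output:
            return {"status": "done", "attempts": attempt, "output": output}
    # Retries exhausted: hand over to whoever `on_fail.escalate_to` names.
    target = step.get("on_fail", {}).get("escalate_to")
    return {
        "status": "escalated" if target else "failed",
        "attempts": attempts_allowed,
        "escalate_to": target,
    }
```

With `max_retries: 2` this gives an agent three chances in total before the step escalates.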

### Template Variables

Steps communicate through KEY: value pairs in their output. When an agent replies with:

```
STATUS: done
REPO: /path/to/repo
BRANCH: feature/my-thing
```

Later steps can reference `{{repo}}` and `{{branch}}` (lowercased key names).

`{{task}}` is always available — it's the original task string passed to `workflow run`.
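
The KEY: value convention above can be sketched in a few lines. This is an illustrative approximation rather than Antfarm's parser: `parse_output` and `render` are hypothetical helpers, only single-line values are handled, and unknown placeholders are left untouched.

```python
import re

# An uppercase KEY at the start of a line, followed by a colon and a value.
KEY_LINE = re.compile(r"^([A-Z][A-Z0-9_]*):\s*(.*)$")
# A {{name}} placeholder using the lowercased key name.
PLACEHOLDER = re.compile(r"\{\{\s*([a-z0-9_]+)\s*\}\}")


def parse_output(text: str) -> dict:
    """Collect KEY: value lines into a dict keyed by the lowercased name."""
    variables = {}
    for line in text.splitlines():
        match = KEY_LINE.match(line.strip())
        if match:
            variables[match.group(1).lower()] = match.group(2).strip()
    return variables


def render(template: str, variables: dict) -> str:
    """Substitute known {{name}} placeholders; leave unknown ones as-is."""
    return PLACEHOLDER.sub(
        lambda m: variables.get(m.group(1), m.group(0)), template
    )
```

For example, parsing the reply shown above and rendering `cd {{repo}} && git checkout {{branch}}` yields `cd /path/to/repo && git checkout feature/my-thing`.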

### Verification Loops

A step can retry a previous step on failure:

```yaml
- id: verify
  agent: verifier
  input: |
    Check the work...
    Reply STATUS: done or STATUS: retry with ISSUES.
  expects: "STATUS: done"
  on_fail:
    retry_step: implement       # Re-run this step with feedback
    max_retries: 3
    on_exhausted:
      escalate_to: human
```

When verification fails with `STATUS: retry`, the `implement` step runs again with `{{verify_feedback}}` populated from the verifier's `ISSUES:` output.
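
The feedback hand-off can be sketched as follows. The helper names `extract_issues` and `next_action` are hypothetical, and the sketch assumes everything after the `ISSUES:` marker belongs to the feedback; Antfarm's real parsing may differ.

```python
def extract_issues(verifier_output: str) -> str:
    """Return the text that follows the ISSUES: marker, or '' if absent."""
    lines = verifier_output.splitlines()
    for index, line in enumerate(lines):
        if line.strip().startswith("ISSUES:"):
            first = line.split("ISSUES:", 1)[1].strip()
            rest = [item.strip() for item in lines[index + 1:] if item.strip()]
            return "\n".join(([first] if first else []) + rest)
    return ""


def next_action(verify_step: dict, verifier_output: str, retries_used: int):
    """Decide what happens after a verify step: continue, retry, or escalate."""
    if verify_step["expects"] in verifier_output:
        return ("continue", {})
    on_fail = verify_step.get("on_fail", {})
    if retries_used < on_fail.get("max_retries", 0):
        return (
            "retry",
            {
                "step": on_fail["retry_step"],
                "verify_feedback": extract_issues(verifier_output),
            },
        )
    return ("escalate", {"to": on_fail.get("on_exhausted", {}).get("escalate_to")})
```

The returned `verify_feedback` string is what a retried step would see in its `{{verify_feedback}}` placeholder.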

### Loop Steps (Story-Based)

For steps that iterate over a list of stories (like implementing multiple features or fixes):

```yaml
- id: implement
  agent: developer
  type: loop
  loop:
    over: stories               # Iterates over stories created by a planner step
    completion: all_done        # Step completes when all stories are done
    fresh_session: true         # Each story gets a fresh agent session
    verify_each: true           # Run a verify step after each story (optional)
    verify_step: verify         # Which step to use for per-story verification (optional)
  input: |
    Implement story {{current_story}}...
  expects: "STATUS: done"
  max_retries: 2
  on_fail:
    escalate_to: human
```

|

||||

|

||||

#### Loop Template Variables

|

||||

|

||||

These variables are automatically injected for loop steps:

|

||||

|

||||

| Variable | Description |

|

||||

|----------|-------------|

|

||||

| `{{current_story}}` | Full story details (title, description, acceptance criteria) |

|

||||

| `{{current_story_id}}` | Story ID (e.g., `S-1`) |

|

||||

| `{{current_story_title}}` | Story title |

|

||||

| `{{completed_stories}}` | List of already-completed stories |

|

||||

| `{{stories_remaining}}` | Number of pending/running stories |

|

||||

| `{{progress}}` | Contents of progress.txt from the agent workspace |

|

||||

| `{{verify_feedback}}` | Feedback from a failed verification (empty if not retrying) |

|

||||

|

||||

#### STORIES_JSON Format

|

||||

|

||||

A planner step creates stories by including `STORIES_JSON:` in its output. The value must be a JSON array of story objects:

|

||||

|

||||

```json
STORIES_JSON: [
  {
    "id": "S-1",
    "title": "Create database schema",
    "description": "Add the users table with email, password_hash, and created_at columns.",
    "acceptanceCriteria": [
      "Migration file exists",
      "Schema includes all required columns",
      "Typecheck passes"
    ]
  },
  {
    "id": "S-2",
    "title": "Add user registration endpoint",
    "description": "POST /api/register that creates a new user.",
    "acceptanceCriteria": [
      "Endpoint returns 201 on success",
      "Validates email format",
      "Tests pass",
      "Typecheck passes"
    ]
  }
]
```

|

||||

|

||||

Required fields per story:

|

||||

|

||||

| Field | Description |
|-------|-------------|
| `id` | Unique story ID (e.g., `S-1`, `fix-001`) |
| `title` | Short description |
| `description` | What needs to be done |
| `acceptanceCriteria` | Array of verifiable criteria (also accepts `acceptance_criteria`) |

|

||||

|

||||

Maximum 20 stories per run. Each story gets a fresh agent session and independent retry tracking (default 2 retries per story).
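
The rules above (a `STORIES_JSON:` marker, four required fields, both spellings of the criteria field, at most 20 stories) can be sketched as a small parser. `parseStoriesJson` and `PlannedStory` are hypothetical names used only for illustration, and the sketch assumes that everything after the marker is the JSON array.

```typescript
type PlannedStory = {
  id: string;
  title: string;
  description: string;
  acceptanceCriteria: string[];
};

const MAX_STORIES = 20; // limit stated in this document

function parseStoriesJson(output: string): PlannedStory[] {
  const marker = "STORIES_JSON:";
  const start = output.indexOf(marker);
  if (start === -1) return []; // the step produced no stories

  // Assumption: everything after the marker is the JSON array.
  const parsed: unknown = JSON.parse(output.slice(start + marker.length));
  if (!Array.isArray(parsed)) throw new Error("STORIES_JSON must be a JSON array");
  if (parsed.length > MAX_STORIES) {
    throw new Error(`Too many stories: ${parsed.length} (max ${MAX_STORIES})`);
  }

  return parsed.map((item: unknown, index: number): PlannedStory => {
    if (typeof item !== "object" || item === null) {
      throw new Error(`Story at index ${index} is not an object`);
    }
    const raw = item as Record<string, unknown>;
    const id = raw["id"];
    const title = raw["title"];
    const description = raw["description"];
    // Both spellings are accepted, per the required-fields table.
    const criteria = raw["acceptanceCriteria"] ?? raw["acceptance_criteria"];
    if (
      typeof id !== "string" ||
      typeof title !== "string" ||
      typeof description !== "string" ||
      !Array.isArray(criteria)
    ) {
      throw new Error(`Story at index ${index} is missing a required field`);
    }
    return { id, title, description, acceptanceCriteria: criteria.map(String) };
  });
}
```

Rejecting an oversized plan outright is one possible policy; truncating to the first 20 stories would be another, and this document does not say which one Antfarm uses.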

|

||||

|

||||

## Agent Workspace Files

|

||||

|

||||

### AGENTS.md

|

||||

|

||||

Instructions for the agent. Include:

|

||||

- What the agent does (its role)

|

||||

- Step-by-step process

|

||||

- Output format (must match the KEY: value pattern)

|

||||

- What NOT to do (scope boundaries)

|

||||

|

||||

### SOUL.md

|

||||

|

||||

Agent persona. Keep it brief — a few lines about tone and approach.

|

||||

|

||||

### IDENTITY.md

|

||||

|

||||

Agent name and role. Example:

|

||||

|

||||

```markdown

|

||||

# Identity

|

||||

- **Name:** Researcher

|

||||

- **Role:** Research agent for my-workflow

|

||||

```

|

||||

|

||||

## Shared Agents

|

||||

|

||||

Antfarm includes shared agents in `agents/shared/` that you can reuse:

|

||||

|

||||

- **setup** — Creates branches, establishes build/test baselines

|

||||

- **verifier** — Verifies work against acceptance criteria

|

||||

- **pr** — Creates pull requests via `gh`

|

||||

|

||||

Reference them from your workflow:

|

||||

|

||||

```yaml
- id: setup
  agent: setup
  role: coding
  workspace:
    files:
      AGENTS.md: ../../agents/shared/setup/AGENTS.md
      SOUL.md: ../../agents/shared/setup/SOUL.md
      IDENTITY.md: ../../agents/shared/setup/IDENTITY.md
```

|

||||

|

||||

## Installing Your Workflow

|

||||

|

||||

Place your workflow directory in `workflows/` and run:

|

||||

|

||||

```bash

|

||||

antfarm workflow install my-workflow

|

||||

```

|

||||

|

||||

This provisions agent workspaces, registers agents in OpenClaw config, and sets up cron polling.

|

||||

|

||||

## Tips

|

||||

|

||||

- **Be specific in input templates.** Agents get the input as their entire task context. Vague inputs produce vague results.

|

||||

- **Include output format in every step.** Agents need to know exactly what KEY: value pairs to return.

|

||||

- **Use verification steps.** A verify -> retry loop catches most quality issues automatically.

|

||||

- **Keep agents focused.** One agent, one job. Don't combine triaging and fixing in the same agent.

|

||||

- **Set appropriate roles.** Use `analysis` for read-only agents and `verification` for verifiers to prevent them from modifying code they're reviewing.

|

||||

- **Test with small tasks first.** Run a simple test task before throwing a complex feature at the pipeline.

|

||||

879

antfarm/docs/design-story-loop.md

Normal file

@@ -0,0 +1,879 @@

|

||||

# Design: Story-Based Execution (Ralph-Style Decomposition)

|

||||

|

||||

> **Status:** Approved, ready for implementation.

|

||||

> **Date:** 2026-02-08

|

||||

> **Approved by:** Ryan Carson

|

||||

|

||||

## Problem

|

||||

|

||||

Today, Antfarm's `feature-dev` workflow hands the entire task to a developer agent in one shot. For non-trivial features, this fails because:

|

||||

|

||||

1. **Context window limits** — large tasks exhaust the agent's context before completion

|

||||

2. **No incremental progress** — if the agent fails partway, everything is lost

|

||||

3. **No checkpoint/resume** — can't pick up where we left off

|

||||

4. **Monolithic commits** — one giant change vs. small, reviewable increments

|

||||

|

||||

Ralph (github.com/snarktank/ralph) solves this by breaking work into small user stories and spawning a fresh session per story. Each story is scoped to fit in one context window. We adopt this pattern.

|

||||

|

||||

## Design Decisions (Final)

|

||||

|

||||

| Decision | Choice | Notes |
|----------|--------|-------|
| Planner model | Same as other agents (Opus 4.6) | No special model |
| Cross-session memory | File-based (`progress.txt`, `AGENTS.md`, `MEMORY.md`) | Not DB-only |
| Verification cadence | Verify after EACH story | Review only at the end |
| Failure handling | Verify/review failures pass back to developer | Existing retry mechanism |
| Progress archiving | Archive `progress.txt` at run completion | Keep history accessible |
| Cron frequency | 5 minutes (down from 15) | Configurable per-workflow |
| Max stories | 20 per run | Planner enforces this |
| Progress sharing | Inject via template variable `{{progress}}` | Other agents don't read the file directly |

|

||||

|

||||

## Architecture

|

||||

|

||||

### Pipeline Flow

|

||||

|

||||

```
[planner] → [developer ⟳ verify] → [test] → [pr] → [review]
                 ↑______|
             (loop per story)
```

|

||||

|

||||

1. **Plan**: Planner reads the task + codebase, produces ordered user stories
2. **Implement + Verify loop**: For each story:
   a. Developer implements the story (fresh session)
   b. Verifier checks it (fresh session)
   c. If verify fails → back to developer for that story
   d. If verify passes → next story
3. **Test**: Full test suite after all stories complete
4. **PR**: Developer creates pull request
5. **Review**: Reviewer checks the PR; if changes needed → back to developer

|

||||

|

||||

---

|

||||

|

||||

## Database Changes

|

||||

|

||||

### New table: `stories`

|

||||

|

||||

```sql
CREATE TABLE IF NOT EXISTS stories (
  id TEXT PRIMARY KEY,
  run_id TEXT NOT NULL REFERENCES runs(id),
  story_index INTEGER NOT NULL,
  story_id TEXT NOT NULL,                  -- e.g. "US-001"
  title TEXT NOT NULL,
  description TEXT NOT NULL,
  acceptance_criteria TEXT NOT NULL,       -- JSON array of strings
  status TEXT NOT NULL DEFAULT 'pending',  -- pending | running | done | failed
  output TEXT,
  retry_count INTEGER DEFAULT 0,
  max_retries INTEGER DEFAULT 2,
  created_at TEXT NOT NULL,
  updated_at TEXT NOT NULL
);
```

|

||||

|

||||

### Altered table: `steps`

|

||||

|

||||

Add three columns (`type` carries a default and the other two are nullable, so existing rows stay valid):

|

||||

|

||||

```sql
-- type: 'single' (default, current behavior) or 'loop'
ALTER TABLE steps ADD COLUMN type TEXT NOT NULL DEFAULT 'single';

-- loop_config: JSON blob, nullable. Only set when type='loop'.
ALTER TABLE steps ADD COLUMN loop_config TEXT;

-- current_story_id: tracks which story a loop step is currently working on
ALTER TABLE steps ADD COLUMN current_story_id TEXT;
```

|

||||

|

||||

Since we use `node:sqlite` (DatabaseSync), the migration approach is: check if columns exist, add if missing. Same pattern as existing `migrate()` in `db.ts`.
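
A minimal sketch of the "check if columns exist, add if missing" pattern follows. It is written against a small `MinimalDb` interface (a hypothetical name) that covers only `exec()` and `prepare().all()`, the two calls this sketch needs from a `DatabaseSync`-style handle. The helper names are illustrative, and the existing `migrate()` in `db.ts` may be structured differently.

```typescript
// Minimal shape of the database handle this sketch relies on (assumption:
// the real handle is a node:sqlite DatabaseSync, which offers these calls).
interface MinimalDb {
  exec(sql: string): void;
  prepare(sql: string): { all(): unknown[] };
}

// Adds a column only when PRAGMA table_info does not already list it.
// Table and column names are trusted constants here, not user input.
function addColumnIfMissing(
  db: MinimalDb,
  table: string,
  column: string,
  definition: string,
): boolean {
  const rows = db.prepare(`PRAGMA table_info(${table})`).all() as Array<{ name: string }>;
  if (rows.some((row) => row.name === column)) return false;
  db.exec(`ALTER TABLE ${table} ADD COLUMN ${column} ${definition}`);
  return true;
}

// Hypothetical migration entry point for the three new steps columns.
function migrateStepsForLoops(db: MinimalDb): void {
  addColumnIfMissing(db, "steps", "type", "TEXT NOT NULL DEFAULT 'single'");
  addColumnIfMissing(db, "steps", "loop_config", "TEXT");
  addColumnIfMissing(db, "steps", "current_story_id", "TEXT");
}
```

Because each column is checked before it is added, the migration is safe to run on every startup.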

|

||||

|

||||

---

|

||||

|

||||

## Type Changes (`types.ts`)

|

||||

|

||||

```typescript
// Add to existing types:

export type LoopConfig = {
  over: "stories";
  completion: "all_done";
  freshSession?: boolean; // default true
  verifyEach?: boolean;   // default false
  verifyStep?: string;    // step id to run after each iteration
};

export type WorkflowStep = {
  id: string;
  agent: string;
  type?: "single" | "loop"; // NEW, default "single"
  loop?: LoopConfig;        // NEW, only when type="loop"
  input: string;
  expects: string;
  max_retries?: number;
  on_fail?: WorkflowStepFailure;
};

export type Story = {
  id: string;
  runId: string;
  storyIndex: number;
  storyId: string; // "US-001"
  title: string;
  description: string;
  acceptanceCriteria: string[];
  status: "pending" | "running" | "done" | "failed";
  output?: string;
  retryCount: number;
  maxRetries: number;
};
```

|

||||

|

||||

---

|

||||

|

||||

## Step Operations Changes (`step-ops.ts`)

|

||||

|

||||

This is the core of the implementation. All loop logic lives here — agents don't know they're in a loop.

|

||||

|

||||

### `claimStep(agentId)` — Updated

|

||||

|

||||

```
1. Find pending step for this agent (existing logic)
2. If step.type === 'loop':
   a. Parse loop_config JSON
   b. If loop_config.over === 'stories':
      - Query stories table: next story with status='pending' for this run
      - If no pending story found:
        * Mark step as 'done'
        * Advance pipeline (existing logic)
        * Return { found: false }
      - Claim the story: set story status='running', set step.current_story_id
      - Build extra template vars:
        * {{current_story}}: formatted story block (id, title, desc, acceptance criteria)
        * {{current_story_id}}: "US-001"
        * {{current_story_title}}: "Add status field"
        * {{completed_stories}}: summary of done stories
        * {{stories_remaining}}: count of pending stories
        * {{verify_feedback}}: from run context (set by verifier on failure)
        * {{progress}}: contents of progress.txt from developer workspace
      - Merge extra vars into context, resolve template, return
3. If step.type === 'single': existing logic unchanged
```
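
The story-claiming branch of the flow above can be sketched in memory as follows. This is an illustration only: `StoryRow` and `claimNextStory` are hypothetical names, the real implementation would query and update the `stories` table, and the `{{current_story}}`, `{{verify_feedback}}`, and `{{progress}}` variables are omitted for brevity.

```typescript
type StoryStatus = "pending" | "running" | "done" | "failed";
type StoryRow = { storyId: string; storyIndex: number; title: string; status: StoryStatus };

type ClaimResult =
  | { found: false }
  | { found: true; story: StoryRow; vars: Record<string, string> };

function claimNextStory(stories: StoryRow[]): ClaimResult {
  // Next pending story in planner order (lowest story_index first).
  const next = [...stories]
    .sort((a, b) => a.storyIndex - b.storyIndex)
    .find((story) => story.status === "pending");
  if (!next) return { found: false }; // caller marks the step done and advances

  next.status = "running"; // claim it
  const done = stories.filter((story) => story.status === "done");
  const remaining = stories.filter((story) => story.status === "pending").length;

  return {
    found: true,
    story: next,
    vars: {
      current_story_id: next.storyId,
      current_story_title: next.title,
      completed_stories: done.map((story) => `${story.storyId}: ${story.title}`).join("\n"),
      stories_remaining: String(remaining),
    },
  };
}
```

Here `stories_remaining` counts stories still pending after the claim, which is one reading of "count of pending stories".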

|

||||

|

||||

### `completeStep(stepId, output)` — Updated

|

||||

|

||||

```
1. Existing: save output, merge KEY:VALUE pairs into context
2. NEW: Detect STORIES_JSON in output:
   - Find the line starting with "STORIES_JSON:"
   - Everything after that prefix (possibly multi-line) is JSON
   - Parse the array and INSERT into stories table
   - Each story gets: run_id from the step, sequential story_index, status='pending'
3. If step is a loop step (type='loop'):
   a. Mark current story as 'done', save output to story
   b. Clear step.current_story_id
   c. Check loop_config.verify_each:
      - If true: set the verify step (by loop_config.verify_step) to 'pending'
        * Also save {{changes}} etc. in run context so verifier can see them
        * The loop step stays 'running' (not 'pending' yet; waiting for verify)
      - If false: check for more pending stories
        * More stories → set step back to 'pending' (next poll picks up next story)
        * No more stories → mark step 'done', advance pipeline
4. If step is a single step: existing advance logic
```

|

||||

|

||||

### Verify step completion (new behavior for verify-each)

|

||||

|

||||

When the verify step completes and it was triggered by a loop step's verify_each:

|

||||

|

||||

```
1. If verify STATUS=done:
   - Check if more pending stories remain
   - If yes: set the loop step back to 'pending' (developer picks up next story)
   - If no: mark loop step 'done', advance pipeline past verify to next step
   - Clear verify_feedback from context
2. If verify STATUS=retry (failure):
   - Set the current story back to 'pending'
   - Store verify ISSUES in context as {{verify_feedback}}
   - Set the loop step back to 'pending' (developer retries the story)
   - Increment story retry_count
   - If story retry_count >= max_retries: fail the story, fail the step, fail the run
```
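
As a rough illustration, the two branches above reduce to a small state transition. `applyVerifyResult` and its types are hypothetical, the function mutates in-memory objects rather than database rows, and it takes the retry check literally (increment first, then compare with `max_retries`).

```typescript
type LoopStory = {
  status: "pending" | "running" | "done" | "failed";
  retryCount: number;
  maxRetries: number;
};

type VerifyOutcome = "next_story" | "loop_done" | "retry_story" | "run_failed";

function applyVerifyResult(
  story: LoopStory,
  pendingStories: number, // stories still pending, excluding this one
  context: Record<string, string>,
  status: "done" | "retry",
  issues = "",
): VerifyOutcome {
  if (status === "done") {
    delete context["verify_feedback"]; // feedback no longer applies
    return pendingStories > 0 ? "next_story" : "loop_done";
  }

  // Verification failed: count the retry, then decide whether to give up.
  story.retryCount += 1;
  if (story.retryCount >= story.maxRetries) {
    story.status = "failed";
    return "run_failed"; // caller fails the step and the run
  }
  story.status = "pending";
  context["verify_feedback"] = issues; // surfaced as {{verify_feedback}}
  return "retry_story";
}
```

Under this literal reading, a story with `maxRetries` of 2 fails the run on its second failed verification. If two full retries are intended, the comparison would need to be `>` instead.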

|

||||

|

||||

**How to detect "this verify completion was triggered by verify_each":**

|

||||

- Check if the verify step's run has a loop step with `verify_each: true` and `verify_step` matching the current step's step_id

|

||||

- Or: add a `triggered_by` field to the step record when setting it to pending

|

||||

|

||||

One option is a nullable `triggered_by_loop TEXT` column on the `steps` table: when verify-each sets the verify step to pending, it writes the loop step's ID there, and verify completion checks that field.

Preferred (simpler): check whether this run has a loop step whose `verify_step` points to this step's step_id and whose status is 'running'. No extra column is needed.
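
A sketch of the column-free check, assuming each step row carries its `loop_config` as the JSON produced from the camelCase `LoopConfig` type (so the keys are `verifyEach` and `verifyStep`). `StepRow` and `findTriggeringLoopStep` are hypothetical names.

```typescript
type StepRow = {
  stepId: string;
  type: "single" | "loop";
  status: string;
  loopConfig: string | null; // JSON blob from the loop_config column
};

// Returns the loop step that is waiting on this verify step, if any.
function findTriggeringLoopStep(runSteps: StepRow[], verifyStepId: string): StepRow | undefined {
  return runSteps.find((step) => {
    if (step.type !== "loop" || step.status !== "running" || !step.loopConfig) return false;
    // Assumption: keys are stored in camelCase, as in the LoopConfig type.
    const config = JSON.parse(step.loopConfig) as { verifyEach?: boolean; verifyStep?: string };
    return config.verifyEach === true && config.verifyStep === verifyStepId;
  });
}
```

If no such step is found, the verify completion falls through to the existing single-step advance logic.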

|

||||

|

||||

### `failStep(stepId, error)` — Updated

|

||||

|

||||

```
1. If step is a loop step:
   a. Fail the current story (increment retry_count)
   b. If story retries remain: story → 'pending', step stays 'pending'
   c. If story retries exhausted: story → 'failed', step → 'failed', run → 'failed'
2. If step is a single step: existing logic
```

|

||||

|

||||

### New: `getStories(runId)`

|

||||

|

||||

```typescript

|

||||

function getStories(runId: string): Story[] {

|

||||

// Return all stories for a run, ordered by story_index

|

||||

}

|

||||

```

|

||||

|

||||

### New: `getCurrentStory(stepId)`

|

||||

|

||||

```typescript

|

||||

function getCurrentStory(stepId: string): Story | null {

|

||||

// Get the story currently being worked on by a loop step

|

||||

// Uses step.current_story_id

|

||||

}

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Run Creation Changes (`run.ts`)

|

||||

|

||||

When inserting steps, persist the new fields:

|

||||

|

||||

```typescript

|

||||

const stepType = step.type ?? "single";

|

||||

const loopConfig = step.loop ? JSON.stringify(step.loop) : null;

|

||||

// Add to INSERT: type, loop_config columns

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Workflow Spec Changes (`workflow-spec.ts`)

|

||||

|

||||

### Parsing

|

||||

|

||||

Read `type` and `loop` from YAML step definitions. Validate:

|

||||

- If `type: loop`, `loop` must be present

|

||||

- `loop.over` must be `"stories"` (only supported value for now)

|

||||

- `loop.completion` must be `"all_done"`

|

||||

- If `loop.verifyEach`, `loop.verifyStep` must reference a valid step id

|

||||

- The referenced verify step must exist in the steps list
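
These checks can be sketched as a function that collects error messages instead of throwing on the first problem. `StepSpec`, `LoopSpec`, and `validateLoopSteps` are hypothetical names, and the sketch assumes the step definitions have already been converted to camelCase.

```typescript
type LoopSpec = {
  over?: string;
  completion?: string;
  verifyEach?: boolean;
  verifyStep?: string;
};
type StepSpec = { id: string; type?: string; loop?: LoopSpec };

function validateLoopSteps(steps: StepSpec[]): string[] {
  const stepIds = new Set(steps.map((step) => step.id));
  const errors: string[] = [];

  for (const step of steps) {
    if (step.type !== "loop") continue;
    const loop = step.loop;
    if (!loop) {
      errors.push(`${step.id}: type "loop" requires a loop block`);
      continue;
    }
    if (loop.over !== "stories") {
      errors.push(`${step.id}: loop.over must be "stories"`);
    }
    if (loop.completion !== "all_done") {
      errors.push(`${step.id}: loop.completion must be "all_done"`);
    }
    if (loop.verifyEach) {
      if (!loop.verifyStep) {
        errors.push(`${step.id}: verifyEach requires verifyStep`);
      } else if (!stepIds.has(loop.verifyStep)) {
        errors.push(`${step.id}: verifyStep "${loop.verifyStep}" is not a step in this workflow`);
      }
    }
  }
  return errors;
}
```

Returning every error at once lets a workflow author fix a spec in one pass; whether Antfarm reports errors this way is not stated here.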

|

||||

|

||||

### YAML field mapping

|

||||

|

||||

```yaml
# In workflow.yml
type: loop               # → step.type = "loop"
loop:
  over: stories          # → loopConfig.over = "stories"
  completion: all_done   # → loopConfig.completion = "all_done"
  verify_each: true      # → loopConfig.verifyEach = true
  verify_step: verify    # → loopConfig.verifyStep = "verify"
```

|

||||

|

||||

Note: YAML uses snake_case, TypeScript uses camelCase. Convert during parsing.
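
One way to do the conversion is a shallow key rename applied to the parsed `loop` object. `snakeToCamel` and `camelizeKeys` are hypothetical helpers; the actual parser may map each field by hand instead.

```typescript
// Converts a single snake_case key to camelCase, e.g. "verify_each" → "verifyEach".
function snakeToCamel(key: string): string {
  return key.replace(/_([a-z0-9])/g, (_match: string, ch: string): string => ch.toUpperCase());
}

// Shallow conversion: only top-level keys are renamed, values are kept as-is.
function camelizeKeys(obj: Record<string, unknown>): Record<string, unknown> {
  const result: Record<string, unknown> = {};
  for (const key of Object.keys(obj)) {
    result[snakeToCamel(key)] = obj[key];
  }
  return result;
}

const loopConfig = camelizeKeys({
  over: "stories",
  completion: "all_done",
  fresh_session: true,
  verify_each: true,
  verify_step: "verify",
});
```

A generic rename keeps the parser short, but explicit per-field mapping gives better error messages for misspelled keys.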

|

||||

|

||||

---

|

||||

|

||||

## Agent Cron Changes (`agent-cron.ts`)

|

||||

|

||||

### Frequency

|

||||

|

||||

Change `EVERY_MS` from `900_000` (15 min) to `300_000` (5 min).

|

||||

|

||||

Make it configurable per-workflow:

|

||||

|

||||

```yaml
# In workflow.yml (optional)
cron:
  interval_ms: 300000 # 5 minutes
```

|

||||

|

||||

If not specified, default to 300_000.
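
A minimal sketch of the lookup with its fallback. `resolveCronIntervalMs` is a hypothetical name, and treating non-positive or non-numeric values as "not specified" is an assumption rather than documented behavior.

```typescript
const DEFAULT_INTERVAL_MS = 300_000; // 5 minutes

type WorkflowCronSpec = { cron?: { interval_ms?: number } };

function resolveCronIntervalMs(workflow: WorkflowCronSpec): number {
  const value = workflow.cron?.interval_ms;
  // Assumption: invalid values fall back to the default instead of raising.
  return typeof value === "number" && Number.isFinite(value) && value > 0
    ? value
    : DEFAULT_INTERVAL_MS;
}
```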

|

||||

|

||||

### Prompt

|

||||

|

||||

No changes needed to the agent cron prompt. The `step claim` / `step complete` / `step fail` CLI commands handle all the loop logic server-side. The agent doesn't know it's in a loop — it just claims work, does it, reports completion. Same prompt works for single and loop steps.

|

||||

|

||||

---

|

||||

|

||||

## CLI Changes (`cli.ts`)

|

||||

|

||||

### New command: `antfarm step stories <run-id>`

|

||||

|

||||

Lists all stories for a run:

|

||||

|

||||

```
$ antfarm step stories abc123
US-001  [done]     Add status field to database
US-002  [done]     Display status badge on task cards
US-003  [running]  Add status toggle to task list rows
US-004  [pending]  Filter tasks by status
```

|

||||

|

||||

### Updated: `antfarm workflow status`

|

||||

|

||||

Include story progress in status output when stories exist.

|

||||

|

||||

---

|

||||

|

||||

## Cross-Session Memory: File-Based

|

||||

|

||||

### Where files live

|

||||

|

||||

Developer agent workspace: `~/.openclaw/workspaces/workflows/feature-dev/agents/developer/`

|

||||

|

||||

This directory contains: `AGENTS.md`, `SOUL.md`, `IDENTITY.md`, `TOOLS.md`, `USER.md`, `HEARTBEAT.md`

|

||||

|

||||

We add: `progress.txt` (created by the developer agent on first story), `MEMORY.md` (optional, created if agent finds it useful), `archive/` (created on run completion).

|

||||

|

||||

### progress.txt

|

||||

|

||||

**Created by:** Developer agent during first story implementation.

|

||||

**Location:** Developer agent workspace directory.

|

||||

**Lifecycle:** Created fresh per run. Archived on run completion.

|

||||

|

||||

Format:

|

||||

```markdown
# Progress Log
Run: <run-id>
Task: <task description>
Started: <timestamp>

## Codebase Patterns
- Pattern 1 discovered during implementation
- Pattern 2
(consolidated reusable patterns, updated by developer after each story)

---

## <timestamp> - US-001: <title>
- What was implemented
- Files changed
- **Learnings:** What was discovered about the codebase
---

## <timestamp> - US-002: <title>
- What was implemented
- Files changed
- **Learnings:** ...
---
```

|

||||

|

||||

### How other agents access progress

|

||||

|

||||

The developer agent writes `progress.txt` to its own workspace. Other agents (verifier, tester) need to see it.

|

||||

|

||||

**Solution:** When `claimStep()` resolves template variables for any step in a run that has stories, it reads the developer workspace's `progress.txt` and injects its contents as `{{progress}}`. This way the verifier/tester prompt can include:

|

||||

|

||||

```yaml
input: |
  ...
  PROGRESS LOG:
  {{progress}}
```

|

||||

|

||||

The `claimStep()` function needs to know the developer workspace path. It can derive this from:

|

||||

- The loop step's agent_id → workflow agent config → workspace path

|

||||

- Or: store the developer workspace path in run context during planning

|

||||

|

||||

Alternatives considered:

- **Derive it from the workflow config.** The planner step outputs `REPO: /path/to/repo`, and the developer's workspace path is deterministic from the workflow config, so `claimStep()` can look up the workspace path from the agent config for the loop step's agent.
- **Read it from the OpenClaw config.** The workspace path is known from `agents.list[].workspace`. A helper `getAgentWorkspace(agentId)` reads the config and returns the path.
- **Store it in run context.** When the loop step first claims a story, set `context.progress_file = "<workspace>/progress.txt"`. `claimStep()` then reads that file for `{{progress}}`.

|

||||

|

||||

**Final approach:** Add a `resolveProgressFile(runId)` helper that:

|

||||

1. Finds the loop step for this run

|

||||

2. Gets its agent_id

|

||||

3. Looks up that agent's workspace from the OpenClaw config

|

||||

4. Returns `<workspace>/progress.txt`

|

||||

|

||||

Then in `claimStep()` for any step (not just loops), if the run has stories, inject `{{progress}}` by reading that file.
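
The four steps of `resolveProgressFile` can be sketched as below. To keep the example self-contained, the run's steps and the OpenClaw agent list are passed in as arguments, whereas the design calls for a helper that takes only `runId` and looks both up itself. `RunStep` and `AgentEntry` are hypothetical shapes.

```typescript
import * as path from "node:path";

type RunStep = { runId: string; type: "single" | "loop"; agentId: string };
type AgentEntry = { id: string; workspace: string }; // mirrors agents.list[] entries

function resolveProgressFile(
  runId: string,
  steps: RunStep[],
  agents: AgentEntry[],
): string | null {
  // 1. Find the loop step for this run.
  const loopStep = steps.find((step) => step.runId === runId && step.type === "loop");
  if (!loopStep) return null; // run has no loop step, so no progress file

  // 2-3. Look up that step's agent and its workspace.
  const agent = agents.find((entry) => entry.id === loopStep.agentId);
  if (!agent) return null;

  // 4. The progress log lives at the workspace root.
  return path.join(agent.workspace, "progress.txt");
}
```

Returning `null` when there is no loop step lets `claimStep()` inject an empty `{{progress}}` for runs without stories.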

|

||||

|

||||

### AGENTS.md updates

|

||||

|

||||

Developer agent updates its own `AGENTS.md` with structural codebase knowledge. This persists across runs. Guidance for what to add:

|

||||

|

||||

- Project stack/framework info

|

||||

- How to run tests

|

||||

- Key file locations and patterns

|

||||

- Gotchas and non-obvious dependencies

|

||||

|

||||

These go in a `## Codebase Knowledge` section that the agent appends to.

|

||||

|

||||

### MEMORY.md

|

||||

|

||||

Optional. If the developer agent creates one, OpenClaw auto-loads it on each session. Could be used for longer-term memory across multiple runs. Not required for the loop mechanism to work.

|

||||

|

||||

### Archiving

|

||||

|

||||

When a run completes (final step done → run status = 'completed'), `completeStep()` should trigger archiving right after it marks the run as completed:

|

||||

|

||||

1. Find the developer workspace for this run's workflow
2. If `progress.txt` exists:
   - Create `archive/<run-id>/`
   - Copy `progress.txt` → `archive/<run-id>/progress.txt`
   - Truncate `progress.txt` (or delete it; the next run creates a fresh one)

|

||||

|

||||

This can be a separate function `archiveRunProgress(runId)` called from `completeStep()` when `runCompleted: true`.
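
A minimal sketch of the archiving step using Node's `fs` and `path` modules. Unlike the `archiveRunProgress(runId)` signature proposed above, this version takes the workspace directory as an argument so that it stays self-contained, and it chooses the "delete" option rather than truncation.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";

// Moves <workspace>/progress.txt into <workspace>/archive/<run-id>/.
// Returns the archived path, or null when there was nothing to archive.
function archiveRunProgress(workspaceDir: string, runId: string): string | null {
  const progressFile = path.join(workspaceDir, "progress.txt");
  if (!fs.existsSync(progressFile)) return null;

  const archiveDir = path.join(workspaceDir, "archive", runId);
  fs.mkdirSync(archiveDir, { recursive: true });

  const archivedFile = path.join(archiveDir, "progress.txt");
  fs.copyFileSync(progressFile, archivedFile);
  fs.unlinkSync(progressFile); // the next run creates a fresh progress.txt
  return archivedFile;
}
```

Copying before deleting means a failure partway through leaves the original log in place.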

|

||||

|

||||

---

|

||||

|

||||

## New Agent: Planner

|

||||

|

||||

### Files to create

|

||||

|

||||

```

|

||||

workflows/feature-dev/agents/planner/AGENTS.md

|

||||

workflows/feature-dev/agents/planner/SOUL.md

|

||||

workflows/feature-dev/agents/planner/IDENTITY.md

|

||||

```

|

||||

|

||||

### AGENTS.md (Planner)

|

||||

|

||||

Should contain:

|

||||

- Role: decompose tasks into user stories

|

||||

- Story sizing rules (must fit in one context window)

|

||||

- Ordering rules (dependencies first: schema → backend → frontend)

|

||||

- Acceptance criteria rules (must be verifiable, always include "Typecheck passes")

|

||||

- Output format (STATUS, REPO, BRANCH, STORIES_JSON)

|

||||

- Max 20 stories rule

|

||||

- Examples of well-sized vs too-big stories

|

||||

- Instructions to explore the codebase before decomposing

|

||||

|

||||

Key content to borrow from Ralph's PRD skill (`/tmp/ralph/skills/ralph/SKILL.md`):

|

||||

- Story sizing section ("Right-sized stories" vs "Too big")

|

||||

- Acceptance criteria section ("Must Be Verifiable")

|

||||

- Story ordering section ("Dependencies First")

|

||||

|

||||

### SOUL.md (Planner)

|

||||

|

||||

Analytical, thorough. Takes time to understand the codebase before decomposing. Not a coder — a planner. Thinks in terms of dependencies, risk, and incremental delivery.

|

||||

|

||||

### IDENTITY.md (Planner)

|

||||

|

||||

```markdown

|

||||

# Identity

|

||||

Name: Planner

|

||||

Role: Decomposes tasks into user stories

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Updated Agent: Developer

|

||||

|

||||

### AGENTS.md changes

|

||||

|

||||

Add sections:

|

||||

|

||||

```markdown
## Story-Based Execution

You work on ONE user story per session. A fresh session is started for each story.

### Each Session

1. Read `progress.txt`, especially the Codebase Patterns section at the top
2. Check the branch, pull latest
3. Implement the story described in your task input
4. Run quality checks
5. Commit: `feat: <story-id> - <story-title>`
6. Append to progress.txt (see format below)
7. Update Codebase Patterns in progress.txt if you found reusable patterns
8. Update AGENTS.md if you learned something structural about the codebase

### progress.txt Format

Append this after completing a story:

## <date/time> - <story-id>: <title>
- What was implemented
- Files changed
- **Learnings:** codebase patterns, gotchas, useful context
---

### Codebase Patterns

If you discover a reusable pattern, add it to the `## Codebase Patterns` section at the TOP of progress.txt. Only add patterns that are general and reusable, not story-specific.

### AGENTS.md Updates

If you discover something structural (not story-specific), add it to your AGENTS.md:
- Project stack/framework
- How to run tests
- Key file locations
- Dependencies between modules
- Gotchas
```

|

||||

|

||||

---

|

||||

|

||||

## Updated Agent: Verifier

|

||||

|

||||

### AGENTS.md changes

|

||||

|

||||

Update to reflect per-story verification:

|

||||

|

||||

```markdown
## Per-Story Verification

You verify ONE story at a time, immediately after the developer completes it.

### What to Check

1. Code exists and is not just TODOs or placeholders
2. Each acceptance criterion for the story is met
3. No obvious incomplete work
4. Typecheck passes
5. If the story has "Verify in browser" criterion, do that

### Context Available

- The story details (in your task input)
- What the developer changed (in your task input)
- The progress log (in your task input as {{progress}})
- The actual code (in the repo on the branch)

### Output

Pass: STATUS: done + VERIFIED: what you confirmed
Fail: STATUS: retry + ISSUES: what's missing/broken (this goes back to the developer)
```

|

||||

|

||||

---

|

||||

|

||||

## Updated Workflow YAML

|

||||

|

||||